

An Unmanned Aircraft System (UAS) is a system in which an unmanned aircraft is operated. It includes elements such as a ground control station, data links and other support equipment. The aircraft itself is flown without a Pilot-in-command on board. It can be remotely controlled from another position, with varying levels of automation up to “fully automated”.

Although it is true that computers can do certain things better than humans, they are only as good as their system design. Taking the human pilot out of the loop removes a significant safety resource. While humans may introduce some failure scenarios, they also eliminate system-failure scenarios, act as a backup for failed systems, bridge technology gaps and adapt in real time to unknown situations. Whether an automated system can adequately compensate for this is highly questionable. Additionally, as input by human pilots is reduced, the risk of system-associated threats increases.

Combine this with the growing complexity of UAS, and accident rates might actually go up instead of down. In line with the Safety-II approach of E. Hollnagel,² overall system safety is not merely a minimization of ‘bad outcomes’, but also a maximization of ‘good outcomes’. How much humans contribute to ‘good outcomes’, and to what extent automation might affect this, especially in highly complex systems such as UAS, has yet to be explored; but it is a crucial aspect that needs to be taken into account.

In view of the above, the current increase in automation levels in UAS should be treated with great caution. In addition to the technical challenges, the debate about moving towards increased levels of automation should focus more on the safety aspects. As we move towards integrating the first fully automated UAS with manned aviation in the same airspace, we must ensure that the current high safety levels of manned aviation are not negatively impacted.

Depending on the definitions used, autonomy is placed at the highest levels of automation. The ICAO Manual on Remotely Piloted Aircraft Systems (RPAS) (Doc 10019) does not contain a definition of automation. It does, however, contain the following definitions of autonomy:

ICAO talks about a remotely piloted aircraft but considers autonomy out of scope for the RPAS Manual.

ECA finds that the word “autonomous” is often used in an inflated way, when the system referred to is not autonomous, but rather highly (perhaps fully) automated. Because of this, and because of the complexity of the issue, words like autonomous, unmanned or robotic are used inconsistently to describe fully automated systems. Most of the time, ‘fully automated’ would be the appropriate term.

Automation is defined as a technique, method or system of operating or controlling a process by highly automatic means, reducing human intervention to a minimum.

In its ‘purest’ sense, autonomy refers to an independent system, which is self-governing and has the power to make its own decisions. An autonomous UAS would determine its own missions, make its own decisions during the missions, do its own strategic planning, and would be truly self-determining and in command of itself.

ECA believes autonomous UAS in the above sense are realistically not feasible in the near- to mid-term future. ECA further questions whether autonomous UAS are even desirable, as this would ultimately mean relinquishing command authority entirely to a system. The potential ramifications of this involve fundamental philosophical, legal, safety, security and societal issues. These need to be meticulously analyzed and discussed in depth before such systems are introduced into the common airspace.³

With the focus of this paper being on automation, a more in-depth look at different levels of automation is required.

Defining the level of automation of a system can be difficult, as often only subtasks within the system are automated rather than the entire system. Also, a UAS is a complex system with numerous subsystems such as navigation, communication, and caution and warning systems. Each of these subsystems may have a different level of automation.

A balance must be found between the technical feasibility of automating a task (including the necessary effort, and the integrity and reliability of the automated system, which determine how much human operators trust the automation) and the benefit that high levels of automation provide.

In its AI roadmap, EASA advocates that ideally automation should be used to reduce the use of human resources for tasks a machine can do, thus allowing the human to better concentrate on high added-value tasks, in particular the safety of the flight. Accordingly, humans should be put at the center of complex decision processes, assisted by the machine.

ECA fully agrees that humans should be put at the centre of complex decision processes. However, we caution against a decision to turn over certain tasks from humans to machines based only on the machine’s capacity to perform the task. Such a decision must rather be based on an in-depth analysis of what the optimal human ↔ machine interface is. Reducing humans to ‘system monitors’ may ultimately reduce safety levels, as humans are not very good at monitoring tasks over longer periods of time. The impacts of human performance limitations must be addressed in the human ↔ machine interface.

In practice this currently results in an intermediate level of automation, somewhere between an RPAS with full human authority, and a fully automated UAS where all decisions are made by the computers.

Advancements in computer technology, especially in the area of machine learning, make an increase in the automation levels of UAS very likely in the near future. However, even the most advanced systems have shown erratic and unpredicted behavior. A system that ensures safe and compatible automatic behavior at acceptable levels of integrity and robustness has yet to be developed. A more formalized process surrounding machine learning is required to ensure that automated operations are performed at adequate levels of safety, reliability, integrity and robustness.

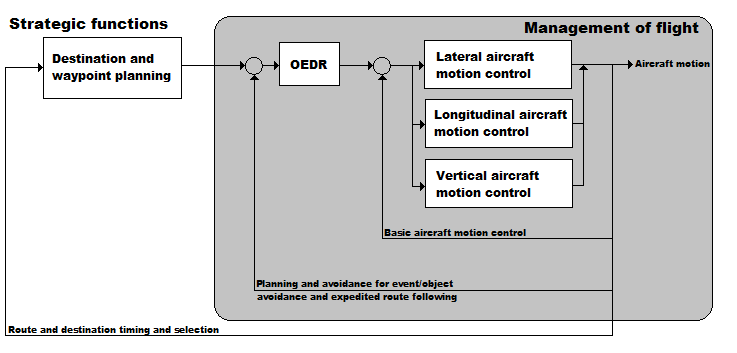

The UAS operation consists of strategic functions combined with the management of flight (see Figure 1). When discussing levels of UAS automation, this document refers to levels of UAS management of flight automation. The management of flight includes basic aircraft control, as well as tactical functions. The tactical functions, which consist of planning and execution for event/object avoidance and expedited route following, are defined as OEDR (Object and Event Detection and Response). These are subtasks of the UAS management of flight that include monitoring the environment, detecting, recognizing, and classifying objects and events and preparing to respond as needed, as well as executing an appropriate response to such objects and events as needed to complete the operation.

As stated above, one of the problems faced by UAS will be classifying the different levels of automation. This might for example be needed for certification, compliance with regulations, insurance coverage or to define the necessary competence of the pilot.

Currently there is no international standard for determining the level of automation of a UAS. Table 2 tries to clarify the different levels of automation, based on SAE International’s J3016 Levels of Driving Automation standard for consumers⁴ and adapted to fit UAS operations. The J3016 standard defines six levels of driving automation, from SAE Level 0 (no automation) up to SAE Level 5 (full vehicle automation). It serves as the industry’s most-cited reference for automated vehicle capabilities. It is important to note that the table refers to the UAS as a whole, and that the overall level of automation reflects the lowest level among its subsystems and components.
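The rule that the overall level reflects the lowest level among the subsystems can be illustrated with a minimal Python sketch. The subsystem names and the levels assigned to them are hypothetical and chosen only for illustration; they are not taken from Table 2 or from SAE J3016.

```python
from enum import IntEnum
from typing import Mapping


class AutomationLevel(IntEnum):
    """Levels 0 (no automation) to 5 (full automation), numbered as in SAE J3016."""
    LEVEL_0 = 0
    LEVEL_1 = 1
    LEVEL_2 = 2
    LEVEL_3 = 3
    LEVEL_4 = 4
    LEVEL_5 = 5


def overall_level(subsystems: Mapping[str, AutomationLevel]) -> AutomationLevel:
    """Return the lowest automation level found among the subsystems."""
    if not subsystems:
        raise ValueError("at least one subsystem is required")
    return min(subsystems.values())


# Hypothetical UAS: names and levels are assumptions for illustration only.
example_uas = {
    "navigation": AutomationLevel.LEVEL_4,
    "communication": AutomationLevel.LEVEL_3,
    "caution_and_warning": AutomationLevel.LEVEL_2,
}
print(overall_level(example_uas).name)  # LEVEL_2
```

Under this reading, a single subsystem with a low level of automation caps the level of the whole UAS, however advanced the other subsystems are.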

1 See page 5-6 for SAE J3016

2 Hollnagel, Erik (2014). Safety-I and Safety-II: The Past and Future of Safety Management. Ashgate / CRC Press, Boca Raton, FL, USA.

3 ECA takes note of EASA’s Artificial Intelligence Roadmap (February 2020), and has a dissenting view on several concepts as outlined in the paper. That includes autonomous flights, described in the Roadmap as fully automated flights, and projected as the ‘holy grail’ of aviation’s future.

4 SAE J3016 Levels of Driving Automation standard for consumers https://www.sae.org/standards/content/j3016_201806/

Table 1. Glossary:

OEDR - Object and Event Detection and Response. The subtasks of the UAS operation that include monitoring the environment; detecting, recognizing, and classifying objects and events and preparing to respond as needed, as well as executing an appropriate response to such objects and events (i.e., as needed to complete the operation and/or operation fallback).

Management of flight fallback - The response by the pilot to either perform the UAS management of flight or achieve a minimal risk condition after the occurrence of one or more system failures relevant to UAS management of flight performance, or upon operational design domain (ODD) exit; or the response by an AFS to achieve a minimal risk condition, given the same circumstances.

ODD - Operational Design Domain. Operating conditions under which a given flight automation system or feature thereof is specifically designed to function, including, but not limited to, environmental, geographical, and time-of-day restrictions, and/or the requisite presence or absence of certain traffic characteristics.

AFS - Automated Flight System. The hardware and software that are collectively capable of performing the entire UAS operation on a sustained basis, regardless of whether it is limited to a specific operational design domain (ODD); this term is used specifically to describe a level 3, 4, or 5 flight automation system.

NOTE: In contrast to AFS, the generic term “flight automation system” refers to any level 1-5 system or feature that performs part or all of the UAS operation on a sustained basis.

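The ODD definition in the glossary can be read as a membership test: current operating conditions are either inside the domain the system was designed for, or outside it (an ODD exit, which calls for the management of flight fallback). The sketch below is a minimal illustration in Python. The three restrictions and all field names and limit values are hypothetical; a real ODD would cover many more conditions.

```python
from dataclasses import dataclass
from datetime import time
from typing import Tuple


@dataclass(frozen=True)
class OperationalDesignDomain:
    """Hypothetical ODD with one environmental, one geographical and one
    time-of-day restriction (all names and limits are assumptions)."""
    max_wind_kt: float
    lat_range: Tuple[float, float]
    lon_range: Tuple[float, float]
    earliest: time
    latest: time

    def contains(self, wind_kt: float, lat: float, lon: float,
                 local_time: time) -> bool:
        """Return True if the given conditions lie inside the ODD."""
        return (
            wind_kt <= self.max_wind_kt
            and self.lat_range[0] <= lat <= self.lat_range[1]
            and self.lon_range[0] <= lon <= self.lon_range[1]
            and self.earliest <= local_time <= self.latest
        )


# Illustrative values only.
odd = OperationalDesignDomain(
    max_wind_kt=25.0,
    lat_range=(50.0, 51.0),
    lon_range=(4.0, 5.0),
    earliest=time(6, 0),
    latest=time(20, 0),
)

inside = odd.contains(wind_kt=10.0, lat=50.5, lon=4.5, local_time=time(12, 0))
# Wind above the limit: the conditions are outside the ODD (an ODD exit).
odd_exit = not odd.contains(wind_kt=30.0, lat=50.5, lon=4.5, local_time=time(12, 0))
```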

At all levels, a human retains command ("in command" in the sense of having or exercising direct authority), but not necessarily control (in the sense of directly acting/controlling).

Up to Level 3, the human being is the fallback option in the event of failures/problems, and thus at these levels the human still needs "classic manual flying skills". The ECA refers to this person as the "Pilot-in-command" for these levels.

While Levels 4 and 5 no longer require a “pilot”, a human still retains the command authority. This authority is limited to the parameters (e.g. technical, regulatory, legal) of the respective mission to be flown. Hence the ECA expert-group refers to this individual as a “Mission-commander”.

The difference between Levels 4 and 5 lies in the Operational Design Domain (ODD). In Level 4 the ODD is limited, and Mission-commanders need to be sufficiently familiar with these limitations to adequately understand and exercise their command authority. In the realm of aviation, understanding such ODD limitations requires background knowledge of navigation, flight performance, aviation law, etc. Consequently, Level 4 Mission-commanders must have airmanship skills commensurate with the limits of the particular operational design domain.

At Level 5 (full automation), the ODD becomes unlimited. Mission-commanders at this level may no longer require any aviation knowledge or skills to exercise their command authority. In a highly automated system, this “command authority” could end up being just an on/off switch, but the Mission-commander would need to be sufficiently informed about the implications of the decision, including any consequences/liabilities (this could also be reflected via appropriate certification of the system).
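The allocation of human roles described above can be summarized in a short Python sketch. It encodes only what the preceding paragraphs state; the class and function names are hypothetical, and the competence strings paraphrase the text rather than quoting any regulation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HumanRole:
    title: str               # term used by the ECA for the human in command
    human_is_fallback: bool  # True if the human is the fallback after failures
    competence: str          # competence described in the text for this role


def human_role(level: int) -> HumanRole:
    """Map an automation level (0-5) to the human role described in the text."""
    if level not in range(6):
        raise ValueError("level must be an integer from 0 to 5")
    if level <= 3:
        # Up to Level 3 the human remains the fallback option.
        return HumanRole("Pilot-in-command", True,
                         "classic manual flying skills")
    if level == 4:
        # Limited ODD: the commander must understand its limitations.
        return HumanRole("Mission-commander", False,
                         "airmanship commensurate with the limits of the ODD")
    # Level 5: unlimited ODD.
    return HumanRole("Mission-commander", False,
                     "possibly no aviation knowledge or skills")


for lvl in range(6):
    role = human_role(lvl)
    print(lvl, role.title, role.human_is_fallback)
```

At every level the returned role still holds command authority; what changes is whether the human is expected to take over control and which competence that requires.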